Main Page: Difference between revisions


[[File:Infocepo-picture.png|thumb|right|Discover cloud and AI on infocepo.com]]

= infocepo.com – Cloud, AI & Labs =

Welcome to the '''infocepo.com''' portal.

This wiki documents Infocepo's '''cloud, AI, automation and lab''' ecosystem.

It is intended for:
* system administrators,
* cloud engineers,
* developers,
* students,
* curious people who want to learn by doing.

The goal is simple: turn theory into '''reusable scripts, diagrams, architectures, APIs and hands-on labs'''.

__TOC__

----

= Accès rapide = | |||

* | == Portail principal == | ||

* [https://infocepo.com infocepo.com] | |||

*. | == Assistant IA == | ||

* [https://chat.infocepo.com Chat assistant] | |||

== Liste des pages du wiki == | |||

* [[Special:AllPages|Toutes les pages]] | |||

| | |||

== Vue d’ensemble == | |||

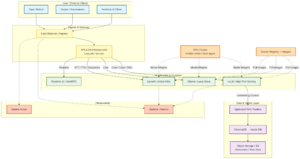

[[File:Ailab-architecture.png|thumb|'''Infra architecture overview''']] | |||

= Getting started =

== Recommended paths ==

; 1. Build a private AI assistant
* Deploy an '''Open WebUI + Ollama + GPU''' stack
* Add a chat model and a summarization model
* Connect internal data via '''RAG + embeddings'''

; 2. Launch a cloud lab
* Create a small Kubernetes, OpenStack or bare-metal cluster
* Set up a deployment pipeline (Helm, Ansible, Terraform…)
* Add an AI service: transcription, summarization, chatbot, OCR…

; 3. Prepare an audit or a migration
* Inventory the servers with '''ServerDiff.sh'''
* Design the target architecture
* Automate the migration with reproducible scripts

== Content overview ==
* '''AI guides & tools''': assistants, models, evaluation, GPU, RAG
* '''Cloud & infrastructure''': Kubernetes, OpenStack, HA, HPC, DevSecOps
* '''Labs & scripts''': audit, migration, automation
* '''Comparisons''': Kubernetes vs OpenStack vs AWS vs bare-metal, etc.

----

= Vision =

[[File:Automation-full-vs-humans.png|thumb|right|The world after automation]]

The long-term goal is to build an environment where:
* private AI assistants speed up production,
* repetitive tasks are automated,
* deployments are industrialized,
* the infrastructure stays '''understandable, portable and reusable'''.

[[File:SUMMARY-DIAGRAM-7311e6b1-aede-4989-ade2-a42d1a6e0ff2.png|thumb|right|Main page summary]]

----

= Quick service catalogue =

{| class="wikitable"
|+ Main services
! Category !! Service !! Role
|-
| API || [https://api.ailab.infocepo.com:wait-2026-06 LLM] || Chat, code, RAG and OCR models
|-
| API || [https://api-audio2txt.ailab.infocepo.com/docs STT] || Audio transcription
|-
| API || [https://api-txt2audio.ailab.infocepo.com/docs TTS] || Speech synthesis
|-
| API || [https://github.com/ynotopec/api-realtime-ai realtime-ai] || Real-time WebSocket / WebRTC
|-
| API || [https://api.ailab.infocepo.com:wait-2026-06 IMAGE2TXT] || OCR / VLM via a dedicated endpoint
|-
| API || [https://api-summary.ailab.infocepo.com:wait-2026-06/docs summary] || Summarization of long texts
|-
| API || [https://text-embeddings.ailab.infocepo.com:wait-2026-06/docs text2embeddings] || Embeddings for RAG
|-
| API || [https://chromadb.ailab.infocepo.com:wait-2026-06 ChromaDB] || Vector database
|-
| API || [https://api-txt2image.ailab.infocepo.com/docs TXT2IMAGE] || Image generation
|-
| API || [https://api-diarization.ailab.infocepo.com/docs diarization] || Speaker segmentation
|-
| Observability || [https://grafana.ailab.infocepo.com:wait-2026-06 monitoring] || Technical dashboards
|-
| Observability || [https://uptime-kuma.ailab.infocepo.com:wait-2026-06/status/ai status] || Service availability
|-
| Observability || [https://web-stat.c1.ailab.infocepo.com:wait-2026-06 web-stat] || Web statistics
|-
| Observability || [https://api.ailab.infocepo.com:wait-2026-06/ui LLM-stat] || API / usage view
|-
| Tools || [https://datalab.ailab.infocepo.com:wait-2026-06 dataLab] || Non-production work environment
|-
| Tools || [https://translate-rt.ailab.infocepo.com realtime translation] || Translation
|-
| Tools || [https://demos.ailab.infocepo.com Demos] || Demonstrators
|}

----

= What's new =

== What's new 26/04/2026 ==

* Added [https://api-tts-omnivoice.ailab.infocepo.com '''TTS Omnivoice''']: improved TTS quality and broader language coverage (600).
* Added [https://api-lightrag.ailab.infocepo.com '''lightRAG''']: LightRAG is an advanced, lightweight RAG framework that combines knowledge graphs and vector search for deep, efficient contextual analysis.
* Added [https://api-reranker.ailab.infocepo.com '''API reranker'''].
* Added [https://api-embedding.ailab.infocepo.com '''API embedding'''].
* [https://huggingface.co/openai/privacy-filter '''privacy-filter''']: personal data filtering.
* A single [https://github.com/multica-ai/andrej-karpathy-skills '''CLAUDE.md'''] file, inspired by Andrej Karpathy, to turn Claude into a real software engineer.
* Added '''qwen3.6''': Qwen3.6 delivers substantial upgrades in agentic coding and thinking preservation over previous Qwen models.
* [https://github.com/NousResearch/hermes-agent '''Hermes Agent''']: the agent that improves and grows with you.
* [https://github.com/ynotopec/api-audio2txt-gemma4 '''gemma4 STT''']: OpenAI-compatible transcription API. Quality is very good, but it still needs to be compared with Whisper3-turbo. It is more memory-hungry. It does not return "timestamp" / "sentence" data.
* [https://github.com/ynotopec/api-audio2txt-qwen3 '''qwen3 STT''']: OpenAI-compatible transcription API. Quality in French is lower than Whisper3-turbo, but other languages remain to be tested. In theory it can handle a heavy load with the current vLLM backend.
* '''cohere STT''': first tests are unconvincing. Probably relevant for single-language transcription, but not suited to multilingual use: the language must be set before transcription. It does not return "timestamp" / "sentence" data.
* [https://github.com/sst/opencode '''opencode''']: coding CLI to compare with Aider / OpenHands.
* DGX Spark: ARM CPU architecture.
* Added [https://github.com/ynotopec/api-convert2md '''api-convert2md''']: table extraction for RAG, compatible with Open WebUI.
* Updated the '''RAG optimisation''' parameters.
* Added [https://github.com/ynotopec/coder-brain/blob/main/first-architecture.md '''experimental brains'''].
* Added [https://github.com/ynotopec/legal-agent '''legal-agent'''].
* Added [https://github.com/ynotopec/ai-security '''ai-security'''].
* Added [https://langextract.ailab.infocepo.com '''langextract''']: entity extraction demo.
* Added [https://sam-audio.c1.ailab.infocepo.com:wait-2026-06 '''sam-audio''']: semantic audio separation.
* Added '''API Realtime''': low-latency bidirectional WebRTC / WebSocket.

----

= Priorities =

== Top tasks ==

* Add [https://github.com/microsoft/presidio '''Presidio''']: PII anonymization / masking, GDPR foundation.
* Add [https://github.com/llm-d/llm-d '''llm-d''']: Kubernetes blueprints + charts to industrialize deployments.
* Add [https://github.com/ai-dynamo/dynamo '''Dynamo''']: multi-node inference orchestration.
* Add [https://github.com/vllm-project/guidellm '''GuideLLM''']: capacity planning / realistic benchmarking.
* Add [https://github.com/NVIDIA-NeMo/Guardrails '''NeMo Guardrails''']: guardrails and policies.

== Backlog / technology watch ==

* OPENRAG > implement / evaluate / add OIDC
* short audio transcription
* translation latency > [https://github.com/ynotopec/api-realtime-ai api-realtime-ai]
* RAG on PDFs with images
* Open WebUI compatibility with Agentic RAG
* scalability
* security > [https://github.com/ynotopec/ai-security ai-security] / [https://github.com/NVIDIA-NeMo/Guardrails NeMo Guardrails]
* [https://github.com/openclaw/openclaw openclaw]
* shared faster-whisper
* AI classifier API
* shared summary API
* KV API (LDAP user / group)
* NER API
* structured document parsing: granite-docling + meilisearch
* Temporal for critical workflows
* [https://github.com/appwrite/appwrite appwrite]
* [https://github.com/vllm-project/semantic-router semantic-router]
* [https://github.com/KeygraphHQ/shannon Shannon]
* [https://huggingface.co/Qwen/Qwen3-ASR-1.7B Qwen3-ASR-1.7B]
* [https://huggingface.co/tencent/Youtu-VL-4B-Instruct Youtu-VL-4B-Instruct]
* [https://huggingface.co/stepfun-ai/Step3-VL-10B Step3-VL-10B]
* [https://huggingface.co/Qwen/Qwen3-TTS-12Hz-1.7B-CustomVoice Qwen3-TTS-12Hz-1.7B-CustomVoice]
* [https://github.com/resemble-ai/chatterbox chatterbox]
* deepset-ai/haystack
* meilisearch
* [https://huggingface.co/ibm-granite/granite-docling-258M granite-docling-258M]
* Airbyte
* [https://github.com/Aider-AI/aider aider]
* [https://github.com/continuedev/continue continue]
* OpenHands
* N8N
* API Compressor
* LightRAG
* [https://huggingface.co/Qwen/Qwen3-Omni-30B-A3B-Instruct Qwen3-Omni-30B-A3B-Instruct]
* Metabase
* browser-use
* MCP LLM
* Dify
* Rasa
* supabase
* mem0
* DeepResearch
* AppFlowy
* dx8152/Qwen-Edit-2509-Multiple-angles

----

= AI assistants & cloud tools =

== AI assistants ==

; '''ChatGPT'''
* [https://chatgpt.com ChatGPT]: public conversational assistant, useful for exploration, writing and quick experiments.

; '''Self-hosted AI assistants'''
* [https://github.com/open-webui/open-webui Open WebUI] + [https://ollama.com Ollama] + GPU
: Typical stack for a private assistant, an OpenAI-compatible API and local experimentation.
* [https://github.com/ynotopec/summarize Private summary]
: Local, fast, offline summarization tool.

== Development, models & technology watch ==

; '''Model discovery'''
* [https://huggingface.co/models Models Trending]

; '''Evaluation & benchmarks'''
* [https://arena.ai/leaderboard/code Agentic Evaluation]

; '''Development & fine-tuning tools'''
* [https://github.com/trending?since=weekly Project Trending]
* [https://grok.com News search]

== AI hardware & GPU ==

* NVIDIA GH200
* DGX Spark
* [https://www.mouser.fr/ProductDetail/BittWare/RS-GQ-GC1-0109?qs=ST9lo4GX8V2eGrFMeVQmFw%3D%3D GROQ LLM accelerator]

----

= API Realtime AI (DEV) =

'''Status:''' DEV environment, planned replacement for the OpenAI API in real-time use cases.

== Configuration ==

{| class="wikitable"
! Variable !! Value
|-
| OPENAI_API_BASE || <code>wss://api-realtime-ai.ailab.infocepo.com:wait-2026-06/v1</code>
|-
| OPENAI_API_KEY || <code>sk-XXXXX</code>
|}

== GitHub repository ==

* [https://github.com/ynotopec/api-realtime-ai ynotopec/api-realtime-ai]

== Test page ==

* <code>external-test/half-duplex.html</code>: echo cancellation + half-duplex mode.

== Compatibility ==

Replace the OpenAI URL with <code>$OPENAI_API_BASE</code> to test compatibility and performance.

----

= API LLM (OpenAI compatible) =

* Base URL: <code>https://api.ailab.infocepo.com:wait-2026-06/v1</code>
* Token creation: [https://llm-token.ailab.infocepo.com:wait-2026-06 OPENAI_API_KEY]
* Documentation: [https://api.ailab.infocepo.com:wait-2026-06 API documentation]

== Model list ==

<pre>
# List the model ids exposed by the gateway, one "* id" line per model
curl -X GET \
  'https://api.ailab.infocepo.com:wait-2026-06/v1/models' \
  -H 'Authorization: Bearer sk-XXXXX' \
  -H 'accept: application/json' \
  | jq -r '.data[].id' | sort -u | sed 's/^/* /'
</pre>
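As a rough Python counterpart to the shell pipeline above, the sketch below extracts the model identifiers from a <code>/v1/models</code> response body. It assumes the gateway returns the usual OpenAI-style shape (<code>{"data": [{"id": ...}]}</code>), which this page does not show. The function name <code>model_ids</code> and the sample body are illustrative, not output from the real API.

```python
import json


def model_ids(response_text: str) -> list[str]:
    """Return the sorted, de-duplicated model ids found in a /v1/models JSON body."""
    body = json.loads(response_text)
    # Assumption: OpenAI-style list response, i.e. {"data": [{"id": "..."}, ...]}
    return sorted({item["id"] for item in body.get("data", []) if "id" in item})


# Hypothetical sample body, for illustration only
sample = '{"object": "list", "data": [{"id": "ai-vision"}, {"id": "ai-embedding"}, {"id": "ai-vision"}]}'
for name in model_ids(sample):
    print(f"* {name}")
```

Parsing the JSON structure, rather than matching lines with a regular expression, keeps the result independent of how the response happens to be pretty-printed.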

== Open models & internal endpoints ==

''Last updated: 2026-04-20''

The models below are '''logical endpoints''' exposed behind a gateway.

{| class="wikitable"
! Endpoint !! Description / main use
|-
| '''ai-multilingual''' || '''qwen3.6 fp8''' in '''nothink''' mode: multilingual
|-
| '''ai-tools''' || '''qwen3.6 fp8''': agentic tasks and tool use
|-
| '''ai-thinking''' || '''qwen3.6 fp8''': thinking
|-
| '''ai-vision''' || '''qwen3.6 fp8''' in '''nothink''' mode: vision / OCR
|-
| '''ai-embedding''' || '''bge-m3''': semantic search
|-
| '''ai-stt''' || '''whisper3-turbo''': multilingual speech transcription
|-
| '''ai-tts''' || '''Kokoro-82M''': limited multilingual TTS ''(current, internal dev)''
|-
| '''ai-tts-next''' || '''OmniVoice''': multilingual TTS under evaluation
|-
| '''ai-image''' || '''OpenDalle''': image generation
|}

== Bash example ==

<pre>
export OPENAI_API_MODEL="ai-chat"
export OPENAI_API_BASE="https://api.ailab.infocepo.com:wait-2026-06/v1"
export OPENAI_API_KEY="sk-XXXXX"

promptValue="What is your name?"

# Build the JSON body with jq so that quotes in the prompt are escaped correctly
jsonValue=$(jq -n --arg model "$OPENAI_API_MODEL" --arg prompt "$promptValue" \
  '{model: $model, messages: [{role: "user", content: $prompt}], temperature: 0}')

# -k skips TLS certificate verification; drop it when the certificate is trusted
curl -k "${OPENAI_API_BASE}/chat/completions" \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d "${jsonValue}" 2>/dev/null | jq '.choices[0].message.content'
</pre>
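The same chat completion call can be sketched in Python with only the standard library. The helper below builds the request without sending it, so the JSON body can be inspected first. The helper name <code>build_chat_request</code>, the placeholder host <code>llm.example.invalid</code> and the sample prompt are assumptions for illustration. The payload fields mirror the Bash example.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for <base_url>/chat/completions."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),  # json.dumps escapes quotes in the prompt
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Placeholder host and key, for illustration only
req = build_chat_request("https://llm.example.invalid/v1", "sk-XXXXX", "ai-chat", 'Say "hello"')
print(req.full_url)
print(req.data.decode("utf-8"))
```

To actually send it, pass <code>req</code> to <code>urllib.request.urlopen</code> and read <code>choices[0].message.content</code> from the decoded JSON response, as the <code>jq</code> filter does in the Bash example.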

== LLM infrastructure view ==

[[File:Litellm-proxy-mermaid-diagram-2024-03-24-205202.png|thumb|right]]

'''DEV (pick one)'''
* '''A.''' <code>LiteLLM → vLLM/SGLang</code>: performance / compatibility tests
* '''B.''' <code>LiteLLM → Ollama</code>: simple, quick to iterate
* '''C.''' <code>Ollama</code> direct: ultra-light POC

'''DEV: French model / summarization'''
* <code>LiteLLM → Ollama /v1</code>

'''PROD'''
* '''Standard:''' <code>LiteLLM → vLLM/SGLang</code>
* '''DEV→PROD bridge:''' <code>LiteLLM (DEV) → LiteLLM (PROD) → vLLM/SGLang</code>

'''Notes:'''
* '''LiteLLM''' = single gateway (keys, quotas, logs)
* '''vLLM/SGLang''' = performance / stability under load
* '''Ollama''' = easy prototyping

----

= API Image to Text =

* Uses the LLM API with an endpoint suited to OCR / VLM.
* Recommended model: <code>ai-vision</code>

== Bash example ==

<pre>
OPENAI_API_KEY=sk-XXXXX

# Encode the image as a single-line base64 string
base64 -w0 "/path/to/image.png" > img.b64

# Build the request body; the image is embedded as a data URL
jq -n --rawfile img img.b64 \
'{
  model: "ai-vision",
  messages: [
    {
      role: "user",
      content: [
        { "type": "text", "text": "Describe this image." },
        {
          "type": "image_url",
          "image_url": { "url": ("data:image/png;base64," + ($img | rtrimstr("\n"))) }
        }
      ]
    }
  ]
}' > payload.json

curl https://api.ailab.infocepo.com:wait-2026-06/v1/chat/completions \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -H "Content-Type: application/json" \
  --data-binary @payload.json
</pre>

== Python example ==

<pre>
import base64
import json
import os

import requests

API_KEY = os.getenv("OPENAI_API_KEY")
MODEL = "ai-vision"
IMG_PATH = "/path/to/image.png"
API_URL = "https://api.ailab.infocepo.com:wait-2026-06/v1/chat/completions"

# Encode the image as base64 so it can be embedded in a data URL
with open(IMG_PATH, "rb") as f:
    img_b64 = base64.b64encode(f.read()).decode("utf-8")

payload = {
    "model": MODEL,
    "messages": [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": "Describe this image."},
                {
                    "type": "image_url",
                    "image_url": {"url": f"data:image/png;base64,{img_b64}"}
                }
            ]
        }
    ]
}

headers = {
    "Authorization": f"Bearer {API_KEY}",
    "Content-Type": "application/json"
}

response = requests.post(API_URL, headers=headers, data=json.dumps(payload))

if response.ok:
    print(json.dumps(response.json(), indent=2, ensure_ascii=False))
else:
    print(f"Error {response.status_code}: {response.text}")
</pre>

----

= API STT =

* URL: <code>https://api-audio2txt.ailab.infocepo.com/v1</code>
* Key: <code>OPENAI_API_KEY=sk-XXXXX</code>
* Model: <code>whisper-1</code>
* Documentation: [https://api-audio2txt.ailab.infocepo.com/docs API STT docs]

== Python example ==

<pre>
import requests

OPENAI_API_KEY = 'sk-XXXXX'
url = 'https://api-audio2txt.ailab.infocepo.com/v1/audio/transcriptions'

headers = {
    'Authorization': f'Bearer {OPENAI_API_KEY}',
}

# Open the audio file in a context manager so it is closed after the upload
with open('/path/to/file.opus', 'rb') as audio:
    files = {
        'file': ('file.opus', audio),
        'model': (None, 'whisper-1')
    }
    response = requests.post(url, headers=headers, files=files)

print(response.json())
</pre>

== curl example ==

<pre>
# Download a sample recording once
[ ! -f /tmp/test.ogg ] && wget "https://upload.wikimedia.org/wikipedia/commons/1/17/Fables_de_La_Fontaine_Livre_1_01.ogg" -O /tmp/test.ogg

export OPENAI_API_KEY=sk-XXXXX

curl https://api-audio2txt.ailab.infocepo.com/v1/audio/transcriptions \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -F model="whisper-1" \
  -F file="@/tmp/test.ogg"
</pre>

== Notes ==

* Several audio formats are accepted.
* The final stream is normalized to '''16 kHz mono'''.
* For best quality, prefer '''Opus at 16 kHz mono'''.
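One possible way to prepare a file in the recommended format before uploading is to re-encode it with <code>ffmpeg</code>. This is a sketch under assumptions: the page does not prescribe a tool, the helper name <code>opus_16k_mono_cmd</code> and the file paths are hypothetical, and the local <code>ffmpeg</code> build is assumed to include the <code>libopus</code> encoder. The snippet only builds and prints the command.

```python
import shlex


def opus_16k_mono_cmd(src: str, dst: str) -> list[str]:
    """Return an ffmpeg command line that re-encodes src to 16 kHz mono Opus."""
    return [
        "ffmpeg",
        "-i", src,          # input file, any format ffmpeg can decode
        "-ac", "1",         # one audio channel (mono)
        "-ar", "16000",     # 16 kHz sample rate
        "-c:a", "libopus",  # Opus encoder (requires an ffmpeg build with libopus)
        dst,
    ]


# Hypothetical paths, for illustration only
cmd = opus_16k_mono_cmd("/path/to/input.wav", "/tmp/output.opus")
print(shlex.join(cmd))
```

To run the conversion, pass the list to <code>subprocess.run(cmd, check=True)</code>.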

== UI ==

* [https://translate-rt.ailab.infocepo.com translate-rt]

----

= API TTS =

* URL: <code>https://api-txt2audio.ailab.infocepo.com/v1</code>
* Key: <code>OPENAI_API_KEY=sk-XXXXX</code>
* Documentation: [https://tts.ailab.infocepo.com:wait-2026-06/docs API TTS docs]

== Example ==

<pre>
export OPENAI_API_KEY=sk-XXXXX

# Request Opus audio and play it directly from stdin with ffplay
curl https://api-txt2audio.ailab.infocepo.com/v1/audio/speech \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gpt-4o-mini-tts",
    "input": "Bonjour, ceci est un test de synthèse vocale.",
    "voice": "coral",
    "instructions": "Speak in a cheerful and positive tone.",
    "response_format": "opus"
  }' | ffplay -i -
</pre>

----

= API Text to Image =

* URL: <code>https://api-txt2image.ailab.infocepo.com/v1</code>
* API key: <code>OPENAI_API_KEY=sk-...</code>
* Documentation: [https://api-txt2image.ailab.infocepo.com/docs API TXT2IMAGE docs]

== Example ==

<pre>
export OPENAI_API_KEY=EMPTY

curl https://api-txt2image.ailab.infocepo.com/v1/images/generations \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "prompt": "a photo of a happy corgi puppy sitting and facing forward, studio light, longshot",
    "n": 1,
    "size": "1024x1024"
  }'
</pre>

----

= API Diarization =

* Documentation: [https://api-diarization.ailab.infocepo.com/docs API Diarization docs]

== Example ==

<pre>
# Download a sample interview recording
wget "https://upload.wikimedia.org/wikipedia/commons/6/60/Mike_Peters_on_Politics_and_Emotion_%28Interview_1984%29.mp3" -O /tmp/test.mp3

curl -X POST "https://api-diarization.ailab.infocepo.com/upload-audio/" \
  -H "Authorization: Bearer token1" \
  -F "file=@/tmp/test.mp3"
</pre>

----

= API Summary =

* Documentation: [https://api-summary.ailab.infocepo.com:wait-2026-06/docs API Summary docs]

== Example ==

<pre>
text="The tower is 324 metres tall and is one of the most recognizable monuments in the world."

# Let jq build the JSON body so the text is escaped correctly
json_payload=$(jq -nc --arg text "$text" '{"text": $text}')

curl -X POST https://api-summary.ailab.infocepo.com:wait-2026-06/summary/ \
  -H "Content-Type: application/json" \
  -d "$json_payload"
</pre>

----

= API Text Embeddings =

* URL: <code>https://text-embeddings.ailab.infocepo.com:wait-2026-06</code>
* Documentation: [https://text-embeddings.ailab.infocepo.com:wait-2026-06/docs Documentation]

== Example ==

<pre>
curl -k https://text-embeddings.ailab.infocepo.com:wait-2026-06/embed \
  -X POST \
  -d '{"inputs":"What is Deep Learning?"}' \
  -H 'Content-Type: application/json'
</pre>

----

= API DB Vectors (ChromaDB) =

== Production ==

* URL: <code>https://chromadb.ailab.infocepo.com:wait-2026-06</code>
* Token: <code>XXXXX</code>

== Lab ==

<pre>
export CHROMA_HOST=https://chromadb.c1.ailab.infocepo.com:wait-2026-06
export CHROMA_PORT=443
export CHROMA_TOKEN=XXXX
</pre>

== curl example ==

<pre>
curl -v "${CHROMA_HOST}"/api/v1/collections \
  -H "Authorization: Bearer ${CHROMA_TOKEN}"
</pre>

== Python example ==

<pre>
import os

import chromadb
from chromadb.config import Settings

# Connection settings come from the environment variables defined in the Lab section
CHROMA_HOST = os.environ["CHROMA_HOST"]
CHROMA_PORT = int(os.environ.get("CHROMA_PORT", "443"))
CHROMA_TOKEN = os.environ.get("CHROMA_TOKEN")


def chroma_http(host, port=80, token=None):
    # Enable token authentication only when a token is provided
    return chromadb.HttpClient(
        host=host,
        port=port,
        ssl=host.startswith('https') or port == 443,
        settings=(
            Settings(
                chroma_client_auth_provider='chromadb.auth.token.TokenAuthClientProvider',
                chroma_client_auth_credentials=token,
            ) if token else Settings()
        )
    )


client = chroma_http(CHROMA_HOST, CHROMA_PORT, CHROMA_TOKEN)
collections = client.list_collections()
print(collections)
</pre>

== Deploying your own instance ==

<pre>
export nameSpace=your_namespace
domainRoot=ailab.infocepo.com

helm repo add chroma https://amikos-tech.github.io/chromadb-chart/
helm repo update

helm upgrade --install chromadb chroma/chromadb -n ${nameSpace} \
  --set chromadb.apiVersion="0.4.24" \
  --set ingress.enabled=true \
  --set ingress.hosts[0].host="${nameSpace}-chromadb.${domainRoot}" \
  --set ingress.hosts[0].paths[0].path=/ \
  --set ingress.hosts[0].paths[0].pathType=ImplementationSpecific \
  --set ingress.annotations."cert-manager\.io/cluster-issuer"=letsencrypt-prod \
  --set ingress.tls[0].secretName=${nameSpace}-chromadb.${domainRoot}-tls \
  --set ingress.tls[0].hosts[0]="${nameSpace}-chromadb.${domainRoot}"

# Remove the request body size limit on the ingress
kubectl -n ${nameSpace} patch ingress/chromadb --type=json \
  -p '[{"op":"add","path":"/metadata/annotations/nginx.ingress.kubernetes.io~1proxy-body-size","value":"0"}]'
</pre>

== Retrieving the token ==

<pre>
kubectl --namespace ${nameSpace} get secret chromadb-auth \
  -o jsonpath="{.data.token}" | base64 --decode && echo
</pre>

----

= Registry =

* URL: [https://registry.ailab.infocepo.com:wait-2026-06 registry.ailab.infocepo.com:wait-2026-06]
* Login: <code>user</code>
* Password: <code>XXXXX</code>

== Example ==

<pre>
curl -u "user:XXXXX" https://registry.ailab.infocepo.com:wait-2026-06/v2/_catalog
</pre>

== K8S example ==

<pre>
# Fill in the target deployment and namespace
deploymentName=
nameSpace=

# Create the image pull secret
kubectl -n ${nameSpace} create secret docker-registry pull-secret \
  --docker-server=registry.ailab.infocepo.com:wait-2026-06 \
  --docker-username=user \
  --docker-password=XXXXX \
  --docker-email=contact@example.com

# Attach the pull secret to the deployment
kubectl -n ${nameSpace} patch deployment ${deploymentName} \
  -p '{"spec":{"template":{"spec":{"imagePullSecrets":[{"name":"pull-secret"}]}}}}'
</pre>

----

= External object storage (S3) =

* Endpoint: <code>https://s3.ailab.infocepo.com:wait-2026-06</code>
* Access key: <code>XXXX</code>
* Secret key: <code>XXXX</code>

A bucket named <code>ORG</code> has been created to store demonstration documents.

----

= RAG optimisation =

* Embeddings: <code>BAAI/bge-m3</code>
* <code>chunk_size=1200</code>
* <code>chunk_overlap=100</code>
* LLM: <code>qwen3.6</code>
* For mixed PDFs: '''PDF → image → OCR / VLM''' can improve results.
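To make the two chunking parameters concrete, here is a minimal sliding-window splitter. It is a sketch, not the splitter used by the platform: the page does not say whether sizes are counted in characters or tokens, so characters are assumed here, and the function name <code>chunk_text</code> is hypothetical.

```python
def chunk_text(text: str, chunk_size: int = 1200, chunk_overlap: int = 100) -> list[str]:
    """Split text into windows of chunk_size characters; consecutive windows share chunk_overlap characters."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reaches the end of the text
    return chunks


document = "x" * 3000
chunks = chunk_text(document)
print([len(c) for c in chunks])
```

With these defaults each new chunk starts 1100 characters after the previous one, so a sentence that straddles a boundary appears in both neighbouring chunks.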

----

= AI factory process =

{| class="wikitable" style="width:80%;"
! Step !! Description !! Tools used !! Owner(s)
|-
| 1 || Idea || - || Project team
|-
| 2 || Development || Onyxia environment / lab || Project team
|-
| 3 || Deployment || CI/CD, GitHub, Kubernetes || DevOps team
|-
| 4 || Monitoring || Uptime-Kuma, dashboards || DevOps team
|-
| 5 || Alerts || Mattermost || DevOps team
|-
| 6 || Infrastructure support || - || SRE team
|-
| 7 || Application support || - || Application team
|}

----

= Environments =

== Non-production ==

* Use [https://datalab.ailab.infocepo.com:wait-2026-06 datalab]
* Support: Mattermost channel "Offre IA"
* Usernames must follow the internal convention
* Request Linux + Kubernetes access if needed

== Production (best-effort) ==

* Publish the application code, the secrets (SOPS format), the Dockerfile and the infrastructure code (Helm or K8S manifests) to Git
* Request a namespace
* Read the associated monitoring documentation

== Infrastructure limits ==

* GPU workloads are intentionally limited during the day.

----

= Cloud Lab & audit projects =

[[File:Infocepo.drawio.png|400px|Cloud Lab reference diagram]]

The '''Cloud Lab''' provides reproducible scenarios: infrastructure audit, cloud migration, automation, high availability.

== Audit project ==

; '''[[ServerDiff.sh]]'''

Bash audit script used to:
* detect configuration drift,
* compare several environments,
* prepare a migration or remediation plan.

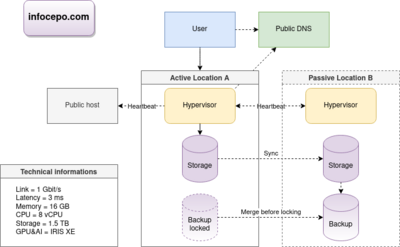

== Cloud migration example ==

[[File:Diagram-migration-ORACLE-KVM-v2.drawio.png|400px|Cloud migration diagram]]

{| class="wikitable"
! Task !! Description !! Duration (days)
|-
| Infrastructure audit || 82 services, automated audit via '''ServerDiff.sh''' || 1.5
|-
| Architecture diagram || Visual design and documentation || 1.5
|-
| Compliance checks || 2 clouds, 6 hypervisors, 6 TB RAM || 1.5
|-
| Cloud platform installation || Deployment of the target environments || 1.0
|-
| Stability check || First functional tests || 0.5
|-
| Automation study || Identification of repetitive tasks || 1.5
|-
| Template development || 6 templates, 8 environments, 2 clouds / OS || 1.5
|-
| Migration diagram || Illustration of the process || 1.0
|-
| Writing the migration code || 138 lines (see '''MigrationApp.sh''') || 1.5
|-
| Stabilization || Validation of reproducibility || 1.5
|-
| Cloud benchmark || Comparison vs legacy || 1.5
|-
| Downtime tuning || Downtime calculation || 0.5
|-
| VM loading || 82 VMs: OS, code, 2 IPs per VM || 0.1
|-
! colspan=2 align="right"| '''Total''' !! 15 person-days
|}
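As a quick arithmetic check of the table, the per-task durations can be summed directly. The dictionary below copies the values from the table, with task labels rendered in English. The exact sum is 15.1 days, which matches the stated total of 15 once rounded to whole days.

```python
# Durations in days, copied from the migration table above
durations_days = {
    "Infrastructure audit": 1.5,
    "Architecture diagram": 1.5,
    "Compliance checks": 1.5,
    "Cloud platform installation": 1.0,
    "Stability check": 0.5,
    "Automation study": 1.5,
    "Template development": 1.5,
    "Migration diagram": 1.0,
    "Writing the migration code": 1.5,
    "Stabilization": 1.5,
    "Cloud benchmark": 1.5,
    "Downtime tuning": 0.5,
    "VM loading": 0.1,
}

total = sum(durations_days.values())
print(f"{total:.1f} days, about {round(total)} person-days")
```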

=== Stability checks (minimal HA) ===

{| class="wikitable"
! Action !! Expected result
|-
| Shut down one node || All services restart automatically on the remaining nodes
|-
| Shut down / restart all nodes at the same time || Services come back up correctly after reboot
|}

----

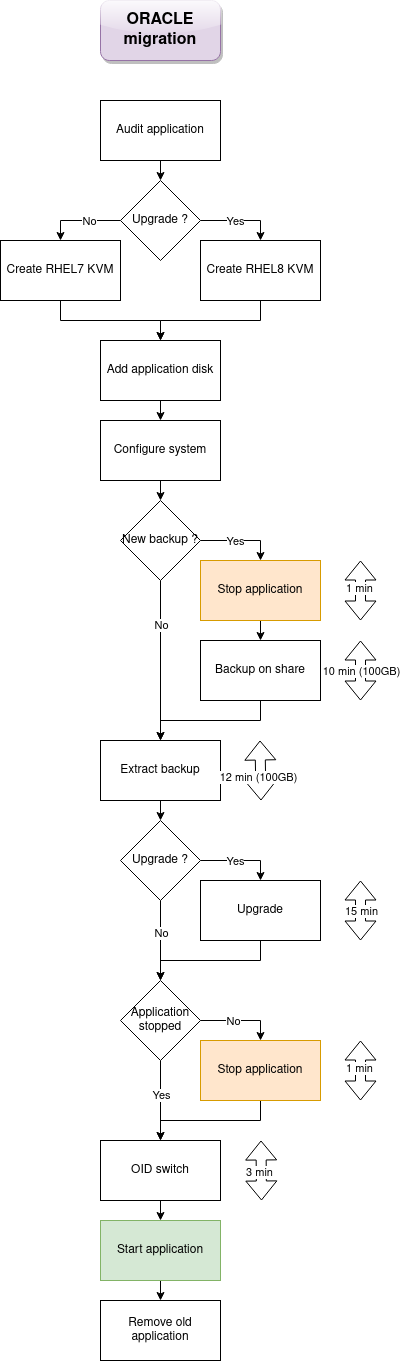

= Web architecture & best practices =

[[File:WebModelDiagram.drawio.png|400px|Reference web architecture]]

Design principles:
* favour a '''simple, modular and flexible''' infrastructure,
* bring content closer to the client (GDNS or equivalent),
* use network load balancers (LVS, IPVS),
* compare costs and avoid '''vendor lock-in''',
* for TLS:
** '''HAProxy''' for fast frontends,
** '''Envoy''' for advanced cases (mTLS, HTTP/2/3),
* for caching:
** '''Varnish''', '''Apache Traffic Server''',
* prefer open-source stacks,
* use queues, buffers and quotas to smooth out peaks.

== References ==

* [https://wikitech.wikimedia.org/wiki/Wikimedia_infrastructure Wikimedia infrastructure]
* [https://github.com/systemdesign42/system-design System Design GitHub]

----

= Comparison of major cloud platforms =

{| class="wikitable"
! Feature !! Kubernetes !! OpenStack !! AWS !! Bare-metal !! HPC !! CRM !! oVirt
|-
| '''Deployment tools''' || Helm, YAML, ArgoCD, Juju || Ansible, Terraform, Juju || CloudFormation, Terraform, Juju || Ansible, Shell || xCAT, Clush || Ansible, Shell || Ansible, Python
|-
| '''Bootstrap method''' || API || API, PXE || API || PXE, IPMI || PXE, IPMI || PXE, IPMI || PXE, API
|-
| '''Router control''' || Kube-router || Router/Subnet API || Route Table / Subnet API || Linux, OVS || xCAT || Linux || API
|-
| '''Firewall control''' || Istio, NetworkPolicy || Security Groups API || Security Group API || Linux firewall || Linux firewall || Linux firewall || API
|-
| '''Network virtualization''' || VLAN, VxLAN || VPC || VPC || OVS, Linux || xCAT || Linux || API
|-
| '''DNS''' || CoreDNS || DNS-Nameserver || Route 53 || GDNS || xCAT || Linux || API
|-
| '''Load balancer''' || Kube-proxy, LVS || LVS || Network Load Balancer || LVS || SLURM || Ldirectord || N/A
|-
| '''Storage''' || Local, cloud, PVC || Swift, Cinder, Nova || S3, EFS, EBS, FSx || Swift, XFS, EXT4, RAID10 || GPFS || SAN || NFS, SAN
|}

This table is a starting point for choosing the right stack according to:
* the desired level of control,
* the context (on-prem, public cloud, HPC…),
* the existing automation tools.

----

= High availability, HPC & DevSecOps =

== High availability with Corosync & Pacemaker ==

[[File:HA-REF.drawio.png|400px|HA cluster architecture]]

Principles:

* multi-node or multi-site clusters,
* fencing through IPMI,
* provisioning with PXE / NTP / DNS / TFTP,
* with 2 nodes: beware of split-brain,
* 3 or more nodes recommended in production.
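The split-brain warning follows from simple majority arithmetic. The sketch below assumes a plain "strict majority of votes" rule with one vote per node; it is not a reproduction of Corosync's configuration, and the function names are hypothetical.

```python
def quorum(nodes: int) -> int:
    """Smallest number of votes that forms a strict majority."""
    return nodes // 2 + 1


def tolerated_failures(nodes: int) -> int:
    """How many nodes can be lost while the rest still hold quorum."""
    return nodes - quorum(nodes)


for n in (2, 3, 5):
    print(f"{n} nodes: quorum={quorum(n)}, tolerated failures={tolerated_failures(n)}")
```

With 2 nodes the majority is 2, so losing either node (or the link between them) leaves no side with quorum; with 3 nodes one failure is tolerated, which is why 3 or more nodes are recommended.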

=== Common resources ===

* multipath, LUNs, LVM, NFS,
* application processes,
* virtual IPs, DNS, network listeners.

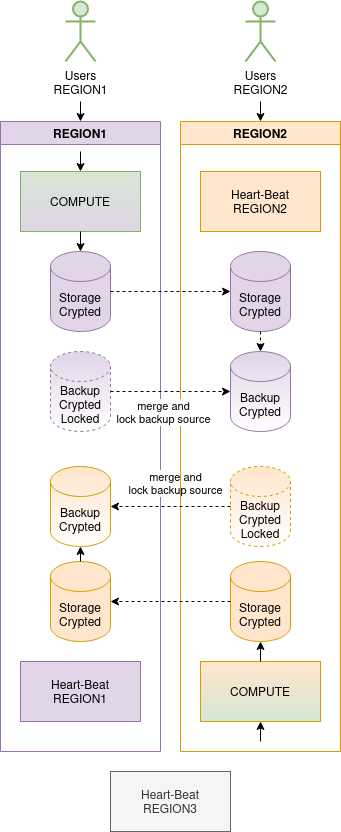

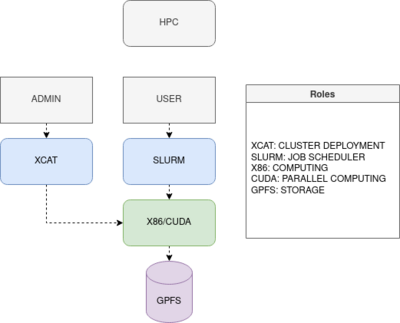

== HPC ==

[[File:HPC.drawio.png|400px|Overview of an HPC cluster]]

* job orchestration (SLURM or equivalent),
* high-performance shared storage,
* possible integration with AI workloads.

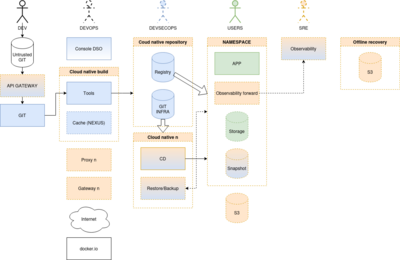

== DevSecOps ==

[[File:DSO-POC-V3.drawio.png|400px|DevSecOps reference design]]

* CI/CD with built-in security checks,
* observability by design,
* vulnerability scanning,
* secrets management,
* policy-as-code.

----

= News & trends =

* [https://www.youtube.com/@lev-selector/videos Top AI News]
* [https://betterprogramming.pub/color-your-captions-streamlining-live-transcriptions-with-diart-and-openais-whisper-6203350234ef Real-time transcription with Diart + Whisper]
* [https://github.com/openai-translator/openai-translator OpenAI Translator]
* [https://opensearch.org/docs/latest/search-plugins/conversational-search OpenSearch with LLM]

----

= Training & learning =

* [https://www.youtube.com/watch?v=4Bdc55j80l8 Transformers Explained]
* Labs, scripts and hands-on field reports in the Cloud Lab project

----

= Useful cloud & IT links =

* [https://cloud.google.com/free/docs/aws-azure-gcp-service-comparison Cloud Providers Compared]
* [https://global-internet-map-2021.telegeography.com/ Global Internet Topology Map]
* [https://landscape.cncf.io/?fullscreen=yes CNCF Official Landscape]
* [https://wikitech.wikimedia.org/wiki/Wikimedia_infrastructure Wikimedia Cloud Wiki]
* [https://openapm.io OpenAPM]
* [https://access.redhat.com/downloads/content/package-browser Red Hat Package Browser]
* [https://www.silkhom.com/barometre-2021-des-tjm-dans-informatique-digital IT daily-rate barometer (TJM)]
* [https://www.glassdoor.fr/salaire/Hays-Salaires-E10166.htm IT salary indicators]

----

= Collaboration tools =

== Code repositories ==

* [https://github.com/ynotopec GitHub ynotopec]

== Knowledge base ==

* this wiki

== Messaging ==

* internal contact / support, depending on the project

== SSO ==

* [https://auth-lab.ailab.infocepo.com:wait-2026-06/auth Keycloak]

== MLflow ==

* [[MLFlow|MLFlow]]

----

= About & contributions =

Suggestions for corrections, diagram improvements, field reports and new labs are welcome.

This wiki is meant to remain a '''living laboratory''' for AI, cloud and automation.

Latest revision as of 22:07, 1 May 2026

infocepo.com – Cloud, AI & Labs

Welcome to the infocepo.com portal.

This wiki documents Infocepo's Cloud, AI, automation and lab ecosystem. It is intended for:

- system administrators,
- cloud engineers,
- developers,
- students,
- anyone curious who wants to learn by doing.

The goal is simple: turn theory into reusable scripts, diagrams, architectures, APIs and hands-on labs.

Quick access

Main portal

AI assistant

List of wiki pages

Overview

Getting started

Recommended paths

1. Build a private AI assistant

- Deploy a stack such as Open WebUI + Ollama + GPU
- Add a chat model and a summarization model
- Connect internal data through RAG + embeddings

2. Launch a cloud lab

- Create a small Kubernetes, OpenStack or bare-metal cluster
- Set up a deployment pipeline (Helm, Ansible, Terraform…)
- Add an AI service: transcription, summarization, chatbot, OCR…

3. Prepare an audit or a migration

- Inventory the servers with ServerDiff.sh
- Design the target architecture
- Automate the migration with reproducible scripts

Content overview

- AI guides & tools: assistants, models, evaluation, GPU, RAG
- Cloud & infrastructure: Kubernetes, OpenStack, HA, HPC, DevSecOps
- Labs & scripts: audit, migration, automation
- Comparisons: Kubernetes vs OpenStack vs AWS vs bare-metal, etc.

Vision

The long-term goal is to build an environment where:

- private AI assistants speed up production,
- repetitive tasks are automated,
- deployments are industrialized,
- the infrastructure stays understandable, portable and reusable.

Quick service catalogue

| Category | Service | Role |
|---|---|---|
| API | LLM | Chat, code, RAG and OCR models |
| API | STT | Audio transcription |
| API | TTS | Speech synthesis |
| API | realtime-ai | Real-time WebSocket / WebRTC |
| API | IMAGE2TXT | OCR / VLM through a dedicated endpoint |
| API | summary | Summarization of long texts |
| API | text2embeddings | Embeddings for RAG |
| API | ChromaDB | Vector database |
| API | TXT2IMAGE | Image generation |
| API | diarization | Speaker segmentation |
| Observability | monitoring | Technical dashboards |
| Observability | status | Service availability |
| Observability | web-stat | Web statistics |
| Observability | LLM-stat | API / usage view |
| Tools | dataLab | Non-production working environment |
| Tools | realtime translation | Translation |
| Tools | Demos | Demonstrators |

What's new

What's new, 26/04/2026

- Added TTS OmniVoice: higher TTS quality and much broader language coverage (600).
- Added LightRAG: an advanced, lightweight RAG framework that combines knowledge graphs and vector search for deep, efficient contextual analysis.
- Added the reranker API.
- Added the embedding API.
- privacy-filter: filtering of personal data.
- A single CLAUDE.md file, inspired by Andrej Karpathy, to make Claude behave like a real software engineer.
- Added qwen3.6: Qwen3.6 delivers substantial upgrades in agentic coding and thinking preservation over previous Qwen models.
- Hermes Agent: the agent that improves and grows with you.
- gemma4 STT: OpenAI-compatible transcription API. Quality is very good and should be compared with Whisper3-turbo. It uses more memory. It does not return "timestamp" "sentence".
- qwen3 STT: OpenAI-compatible transcription API. Quality in French is lower than Whisper3-turbo, but other languages remain to be tested. In theory it can take a heavy load with the current vLLM backend.
- cohere STT: first tests were not convincing. Probably relevant for single-language transcription, but not suited to multilingual use. The language must be set before transcription. It does not return "timestamp" "sentence".
- opencode: CLI coder, to be compared with Aider / OpenHands.
- DGX Spark: ARM CPU architecture.
- Added api-convert2md: table extraction for RAG, compatible with Open WebUI.
- Updated the RAG optimization parameters.
- Added experimental brains.
- Added legal-agent.
- Added ai-security.
- Added langextract: entity extraction demo.
- Added sam-audio: semantic audio separation.
- Added the Realtime API: low-latency bidirectional WebRTC / WebSocket.

Priorities

Top tasks

- Add Presidio: PII anonymization / masking, GDPR foundation.
- Add llm-d: blueprints + Kubernetes charts to industrialize deployments.
- Add Dynamo: multi-node inference orchestration.
- Add GuideLLM: capacity planning / realistic benchmarking.
- Add NeMo Guardrails: guardrails and policies.

Backlog / tech watch

- OPENRAG > implement / evaluate / add OIDC
- short audio transcription
- translation latency > api-realtime-ai
- RAG on PDFs with images
- Open WebUI compatibility with Agentic RAG
- scalability
- security > ai-security / NeMo Guardrails
- openclaw
- shared faster-whisper
- AI classifier API
- shared summary API
- KV API (LDAP user / group)
- NER API
- structured document parsing: granite-docling + meilisearch
- Temporal for critical workflows

- appwrite

- semantic-router

- Shannon

- Qwen3-ASR-1.7B

- Youtu-VL-4B-Instruct

- Step3-VL-10B

- Qwen3-TTS-12Hz-1.7B-CustomVoice

- chatterbox

- deepset-ai/haystack

- meilisearch

- granite-docling-258M

- Airbyte

- aider

- continue

- OpenHands

- N8N

- API Compressor

- LightRAG

- Qwen3-Omni-30B-A3B-Instruct

- Metabase

- browser-use

- MCP LLM

- Dify

- Rasa

- supabase

- mem0

- DeepResearch

- AppFlowy

- dx8152/Qwen-Edit-2509-Multiple-angles

AI assistants & cloud tools

AI assistants

- ChatGPT – public conversational assistant, useful for exploration, writing and quick experiments.
- Self-hosted AI assistants
- Open WebUI + Ollama + GPU
- Typical stack for a private assistant, an OpenAI-compatible API and local experimentation.
- Local summarization tool, fast and offline.

Development, models & tech watch

- Model discovery
- Evaluation & benchmarks
- Development & fine-tuning tools

AI hardware & GPU

- NVIDIA GH200
- DGX Spark
- GROQ LLM accelerator

Realtime AI API (DEV)

Status: DEV environment, planned replacement for the OpenAI API in real-time use cases.

Configuration

| Variable | Value |
|---|---|
| OPENAI_API_BASE | wss://api-realtime-ai.ailab.infocepo.com:wait-2026-06/v1 |
| OPENAI_API_KEY | sk-XXXXX |

GitHub repository

Test page

external-test/half-duplex.html: echo cancellation + half-duplex mode.

Compatibility

Replace the OpenAI URL with $OPENAI_API_BASE to test compatibility and performance.

LLM API (OpenAI-compatible)

- Base URL: https://api.ailab.infocepo.com:wait-2026-06/v1
- Token creation: OPENAI_API_KEY
- Documentation: API documentation

List of models

curl -X GET \
  'https://api.ailab.infocepo.com:wait-2026-06/v1/models' \
  -H 'Authorization: Bearer sk-XXXXX' \
  -H 'accept: application/json' \
  | jq | sed -rn 's#^.*id.*: "(.*)".*$#* \1#p' | sort -u
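The `jq | sed | sort -u` pipeline above extracts the model identifiers from the response. A Python sketch of the same step follows; it assumes the endpoint returns the usual OpenAI-style body (`{"object": "list", "data": [{"id": ...}]}`), and the helper name `model_ids` is hypothetical.

```python
import json


def model_ids(response_text: str) -> list[str]:
    """Return the sorted, de-duplicated model ids from a /v1/models response body."""
    body = json.loads(response_text)
    # Assumption: models are listed under "data", each entry carrying an "id".
    return sorted({entry["id"] for entry in body.get("data", [])})


# Illustrative body only; the real list comes from the API.
sample = '{"object": "list", "data": [{"id": "ai-vision"}, {"id": "ai-embedding"}, {"id": "ai-vision"}]}'
for name in model_ids(sample):
    print(f"* {name}")
```

Parsing the JSON rather than matching lines with `sed` avoids depending on how `jq` pretty-prints the output.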

Open models & internal endpoints

Last updated: 2026-04-20

The models below are logical endpoints exposed behind a gateway.

| Endpoint | Description / main use |
|---|---|
| ai-multilingual | qwen3.6 fp8 in nothink mode – multilingual |
| ai-tools | qwen3.6 fp8 – agentic tasks and tools |
| ai-thinking | qwen3.6 fp8 – thinking |
| ai-vision | qwen3.6 fp8 in nothink mode – vision/OCR |
| ai-embedding | bge-m3 – semantic search |
| ai-stt | whisper3-turbo – multilingual speech transcription |
| ai-tts | Kokoro-82M – limited multilingual TTS (current, internal dev) |
| ai-tts-next | OmniVoice – multilingual TTS under evaluation |
| ai-image | OpenDalle – image generation |

Bash example

export OPENAI_API_MODEL="ai-chat"
export OPENAI_API_BASE="https://api.ailab.infocepo.com:wait-2026-06/v1"
export OPENAI_API_KEY="sk-XXXXX"

promptValue="What is your name?"

# Build the JSON body by string concatenation:
# the prompt must not contain double quotes or backslashes.
jsonValue='{
  "model": "'${OPENAI_API_MODEL}'",
  "messages": [{"role": "user", "content": "'${promptValue}'"}],
  "temperature": 0
}'

# -k disables TLS certificate verification; 2>/dev/null hides curl's progress output.
curl -k "${OPENAI_API_BASE}/chat/completions" \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d "${jsonValue}" 2>/dev/null | jq '.choices[0].message.content'
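The Bash example assembles its JSON body by string concatenation, which breaks as soon as the prompt contains a double quote. A minimal Python sketch of a safer way to build the same body is shown below; the helper name `chat_payload` is hypothetical, and the field names simply mirror the request above.

```python
import json


def chat_payload(model: str, prompt: str, temperature: float = 0) -> str:
    """Serialize a chat-completions request body, escaping the prompt correctly."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }
    # ensure_ascii=False keeps accented characters readable in the output.
    return json.dumps(body, ensure_ascii=False)


# A prompt with quotes and accents stays valid JSON.
print(chat_payload("ai-chat", 'Explique le mot "quota" en une phrase.'))
```

The resulting string can be passed to `curl -d` or sent as the request body by any HTTP client.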

LLM infrastructure overview

DEV (pick one)

- A. LiteLLM → vLLM/SGLang: performance / compatibility tests
- B. LiteLLM → Ollama: simple, quick to iterate
- C. Ollama direct: ultra-light POC

DEV – French model / summarization

LiteLLM → Ollama /v1

PROD

- Standard: LiteLLM → vLLM/SGLang
- DEV→PROD bridge: LiteLLM (DEV) → LiteLLM (PROD) → vLLM/SGLang

Notes:

- LiteLLM = single gateway (keys, quotas, logs)
- vLLM/SGLang = performance / stability under load
- Ollama = easy prototyping

Image to Text API

- Uses the LLM API with an endpoint suited to OCR / VLM.
- Recommended model: ai-vision

Bash example

OPENAI_API_KEY=sk-XXXXX

# Encode the image as a single base64 line
base64 -w0 "/path/to/image.png" > img.b64

# Build the request body with jq so the base64 string is embedded safely
jq -n --rawfile img img.b64 \
'{
  model: "ai-vision",
  messages: [
    {
      role: "user",
      content: [
        { "type": "text", "text": "Describe this image." },
        {
          "type": "image_url",
          "image_url": { "url": ("data:image/png;base64," + ($img | rtrimstr("\n"))) }
        }
      ]
    }
  ]
}' > payload.json

curl https://api.ailab.infocepo.com:wait-2026-06/v1/chat/completions \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -H "Content-Type: application/json" \
  --data-binary @payload.json

Python example

import base64
import json
import os

import requests

API_KEY = os.getenv("OPENAI_API_KEY")
MODEL = "ai-vision"
IMG_PATH = "/path/to/image.png"
API_URL = "https://api.ailab.infocepo.com:wait-2026-06/v1/chat/completions"

# Read the image and encode it as base64 for the data URL
with open(IMG_PATH, "rb") as f:
    img_b64 = base64.b64encode(f.read()).decode("utf-8")

payload = {
    "model": MODEL,
    "messages": [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": "Describe this image."},
                {
                    "type": "image_url",
                    "image_url": {"url": f"data:image/png;base64,{img_b64}"}
                }
            ]
        }
    ]
}

headers = {
    "Authorization": f"Bearer {API_KEY}",
    "Content-Type": "application/json"
}

response = requests.post(API_URL, headers=headers, data=json.dumps(payload))

if response.ok:
    print(json.dumps(response.json(), indent=2, ensure_ascii=False))
else:
    print(f"Error {response.status_code}: {response.text}")

STT API

- URL: https://api-audio2txt.ailab.infocepo.com/v1
- Key: OPENAI_API_KEY=sk-XXXXX
- Model: whisper-1
- Documentation: API STT docs

Python example

import requests

OPENAI_API_KEY = 'sk-XXXXX'
url = 'https://api-audio2txt.ailab.infocepo.com/v1/audio/transcriptions'

headers = {
    'Authorization': f'Bearer {OPENAI_API_KEY}',
}

# Open the audio file in a context manager so it is closed after the upload
with open('/path/to/file.opus', 'rb') as audio:
    files = {
        'file': ('file.opus', audio),
        'model': (None, 'whisper-1')
    }
    response = requests.post(url, headers=headers, files=files)

print(response.json())

curl example

[ ! -f /tmp/test.ogg ] && wget "https://upload.wikimedia.org/wikipedia/commons/1/17/Fables_de_La_Fontaine_Livre_1_01.ogg" -O /tmp/test.ogg

export OPENAI_API_KEY=sk-XXXXX

curl https://api-audio2txt.ailab.infocepo.com/v1/audio/transcriptions \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -F model="whisper-1" \
  -F file="@/tmp/test.ogg"

Notes

- Several audio formats are accepted.
- The final stream is normalized to 16 kHz mono.
- For best quality, prefer OPUS at 16 kHz mono.
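To follow the last recommendation, a file can be converted before upload. The sketch below only builds an `ffmpeg` command line (`-ac 1` for mono, `-ar 16000` for 16 kHz, `-c:a libopus` for the Opus encoder); it assumes `ffmpeg` with libopus support is installed, and the helper name and file paths are hypothetical.

```python
import subprocess


def opus_16k_mono_cmd(src: str, dst: str) -> list[str]:
    """Build an ffmpeg command that re-encodes src as 16 kHz mono Opus."""
    return [
        "ffmpeg", "-y",      # overwrite dst if it already exists
        "-i", src,           # input file, any format ffmpeg can read
        "-ac", "1",          # one audio channel (mono)
        "-ar", "16000",      # 16 kHz sample rate
        "-c:a", "libopus",   # Opus encoder
        dst,
    ]


if __name__ == "__main__":
    # Hypothetical paths; check=True raises if ffmpeg exits with an error.
    subprocess.run(opus_16k_mono_cmd("/tmp/test.ogg", "/tmp/test.opus"), check=True)
```

Since the service normalizes audio to 16 kHz mono anyway, converting on the client mainly reduces upload size.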

UI

TTS API

- URL: https://api-txt2audio.ailab.infocepo.com/v1
- Key: OPENAI_API_KEY=sk-XXXXX
- Documentation: API TTS docs

Example

export OPENAI_API_KEY=sk-XXXXX

# The audio stream is piped straight into ffplay for playback
curl https://api-txt2audio.ailab.infocepo.com/v1/audio/speech \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gpt-4o-mini-tts",
    "input": "Bonjour, ceci est un test de synthèse vocale.",
    "voice": "coral",
    "instructions": "Speak in a cheerful and positive tone.",
    "response_format": "opus"
  }' | ffplay -i -

Text to Image API

- URL: https://api-txt2image.ailab.infocepo.com/v1
- API key: OPENAI_API_KEY=sk-...
- Documentation: API TXT2IMAGE docs

Example

export OPENAI_API_KEY=EMPTY

curl https://api-txt2image.ailab.infocepo.com/v1/images/generations \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "prompt": "a photo of a happy corgi puppy sitting and facing forward, studio light, longshot",
    "n": 1,
    "size": "1024x1024"
  }'

Diarization API

- Documentation: API Diarization docs

Example

wget "https://upload.wikimedia.org/wikipedia/commons/6/60/Mike_Peters_on_Politics_and_Emotion_%28Interview_1984%29.mp3" -O /tmp/test.mp3

curl -X POST "https://api-diarization.ailab.infocepo.com/upload-audio/" \
  -H "Authorization: Bearer token1" \
  -F "file=@/tmp/test.mp3"

Summary API

- Documentation: API Summary docs

Example

text="The tower is 324 metres tall and is one of the most recognizable monuments in the world."

# jq builds the JSON body and escapes the text safely
json_payload=$(jq -nc --arg text "$text" '{"text": $text}')

curl -X POST https://api-summary.ailab.infocepo.com:wait-2026-06/summary/ \
  -H "Content-Type: application/json" \
  -d "$json_payload"

Text Embeddings API

- URL: https://text-embeddings.ailab.infocepo.com:wait-2026-06
- Documentation: Documentation

Example

curl -k https://text-embeddings.ailab.infocepo.com:wait-2026-06/embed \
  -X POST \
  -d '{"inputs":"What is Deep Learning?"}' \
  -H 'Content-Type: application/json'
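Embeddings are typically compared with cosine similarity when used for semantic search or RAG. The sketch below assumes the endpoint returns one numeric vector per input; the vectors and function names are made up for illustration and do not come from the service.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank(query: list[float], documents: list[list[float]]) -> list[int]:
    """Indices of documents ordered from most to least similar to the query."""
    scores = [cosine_similarity(query, doc) for doc in documents]
    return sorted(range(len(documents)), key=lambda i: scores[i], reverse=True)


# Toy 2-dimensional vectors; real embeddings have far more dimensions.
print(rank([1.0, 0.0], [[0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]))
```

A vector database such as ChromaDB performs this kind of ranking internally, usually with an approximate index rather than a full scan.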

Vector DB API (ChromaDB)

Production

- URL: https://chromadb.ailab.infocepo.com:wait-2026-06
- Token: XXXXX

Lab

export CHROMA_HOST=https://chromadb.c1.ailab.infocepo.com:wait-2026-06
export CHROMA_PORT=443
export CHROMA_TOKEN=XXXX

curl example

curl -v "${CHROMA_HOST}/api/v1/collections" \
  -H "Authorization: Bearer ${CHROMA_TOKEN}"

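Python example

import os

import chromadb
from chromadb.config import Settings

# Connection settings are read from the environment variables exported above
CHROMA_HOST = os.environ["CHROMA_HOST"]
CHROMA_PORT = int(os.environ.get("CHROMA_PORT", "443"))
CHROMA_TOKEN = os.environ.get("CHROMA_TOKEN")

def chroma_http(host, port=80, token=None):
    # Enable TLS for https hosts or port 443; add token auth only when a token is given
    return chromadb.HttpClient(
        host=host,
        port=port,
        ssl=host.startswith('https') or port == 443,
        settings=(
            Settings(
                chroma_client_auth_provider='chromadb.auth.token.TokenAuthClientProvider',
                chroma_client_auth_credentials=token,
            ) if token else Settings()
        )
    )

client = chroma_http(CHROMA_HOST, CHROMA_PORT, CHROMA_TOKEN)
collections = client.list_collections()
print(collections)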

Deploy your own instance

export nameSpace=your_namespace
domainRoot=ailab.infocepo.com

helm repo add chroma https://amikos-tech.github.io/chromadb-chart/
helm repo update

helm upgrade --install chromadb chroma/chromadb -n ${nameSpace} \
  --set chromadb.apiVersion="0.4.24" \
  --set ingress.enabled=true \
  --set ingress.hosts[0].host="${nameSpace}-chromadb.${domainRoot}" \
  --set ingress.hosts[0].paths[0].path=/ \
  --set ingress.hosts[0].paths[0].pathType=ImplementationSpecific \
  --set ingress.annotations."cert-manager\.io/cluster-issuer"=letsencrypt-prod \
  --set ingress.tls[0].secretName=${nameSpace}-chromadb.${domainRoot}-tls \
  --set ingress.tls[0].hosts[0]="${nameSpace}-chromadb.${domainRoot}"

# Remove the request body size limit on the ingress
kubectl -n ${nameSpace} patch ingress/chromadb --type=json \
  -p '[{"op":"add","path":"/metadata/annotations/nginx.ingress.kubernetes.io~1proxy-body-size","value":"0"}]'

Retrieve the token

kubectl --namespace ${nameSpace} get secret chromadb-auth \
  -o jsonpath="{.data.token}" | base64 --decode && echo

Registry

- URL: registry.ailab.infocepo.com:wait-2026-06
- Login: user
- Password: XXXXX

Example

curl -u "user:XXXXX" https://registry.ailab.infocepo.com:wait-2026-06/v2/_catalog

K8S example

deploymentName=
nameSpace=

# Create the image pull secret for the registry
kubectl -n ${nameSpace} create secret docker-registry pull-secret \
  --docker-server=registry.ailab.infocepo.com:wait-2026-06 \
  --docker-username=user \
  --docker-password=XXXXX \
  --docker-email=contact@example.com

# Attach the pull secret to the deployment
kubectl -n ${nameSpace} patch deployment ${deploymentName} \
  -p '{"spec":{"template":{"spec":{"imagePullSecrets":[{"name":"pull-secret"}]}}}}'

External object storage (S3)

- Endpoint: https://s3.ailab.infocepo.com:wait-2026-06
- Access key: XXXX
- Secret key: XXXX

A bucket named ORG has been created to store demonstration documents.

RAG optimization

- Embeddings: BAAI/bge-m3, chunk_size=1200, chunk_overlap=100
- LLM: qwen3.6
- For mixed PDFs: PDF → image → OCR / VLM can improve results.
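To make the two chunking parameters concrete, here is a minimal sketch of fixed-size chunking with overlap. It assumes sizes are counted in characters (the page does not say whether they are characters or tokens), and `chunk_text` is a hypothetical helper, not the splitter actually used by the platform.

```python
def chunk_text(text: str, chunk_size: int = 1200, chunk_overlap: int = 100) -> list[str]:
    """Split text into windows of chunk_size that share chunk_overlap characters."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    if not text:
        return []
    step = chunk_size - chunk_overlap  # distance between two chunk starts
    # Stop once the remaining tail is already covered by the previous chunk.
    last_start = max(len(text) - chunk_overlap, 1)
    return [text[i:i + chunk_size] for i in range(0, last_start, step)]


chunks = chunk_text("x" * 2500)
print([len(c) for c in chunks])
```

The overlap means a sentence cut at a chunk boundary still appears whole in one of the two neighbouring chunks, at the cost of embedding slightly more text.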

AI factory process

| Step | Description | Tools used | Owner(s) |
|---|---|---|---|
| 1 | Idea | - | Project team |
| 2 | Development | Onyxia environment / lab | Project team |
| 3 | Deployment | CI/CD, GitHub, Kubernetes | DevOps team |
| 4 | Monitoring | Uptime-Kuma, dashboards | DevOps team |
| 5 | Alerts | Mattermost | DevOps team |
| 6 | Infrastructure support | - | SRE team |
| 7 | Application support | - | Application team |

Environments

Non-production

- Use datalab
- Support: Mattermost channel Offre IA
- The user nickname must follow the internal convention
- Request Linux + Kubernetes access if needed

Production (best-effort)

- Publish the application code, the secrets (SOPS format), the Dockerfile and the infrastructure code (Helm or K8S manifests) to Git
- Request a namespace
- Read the associated monitoring documentation

Infrastructure limits

- GPU workloads are intentionally limited during the day.

Cloud Lab & audit projects

The Cloud Lab provides reproducible scenarios: infrastructure audit, cloud migration, automation, high availability.

Audit project

A Bash audit script that can:

- detect configuration drift,
- compare several environments,
- prepare a migration or remediation plan.

Cloud migration example

| Task | Description | Duration (days) |
|---|---|---|
| Infrastructure audit | 82 services, automated audit with ServerDiff.sh | 1.5 |
| Architecture diagram | Visual design and documentation | 1.5 |
| Compliance checks | 2 clouds, 6 hypervisors, 6 TB RAM | 1.5 |
| Cloud platform installation | Deployment of the target environments | 1.0 |
| Stability check | First functional tests | 0.5 |
| Automation study | Identification of repetitive tasks | 1.5 |
| Template development | 6 templates, 8 environments, 2 clouds / OS | 1.5 |
| Migration diagram | Illustration of the process | 1.0 |
| Writing the migration code | 138 lines (see MigrationApp.sh) | 1.5 |
| Stabilization | Validation of reproducibility | 1.5 |
| Cloud benchmark | Comparison vs legacy | 1.5 |
| Downtime tuning | Downtime calculation | 0.5 |
| VM loading | 82 VMs: OS, code, 2 IPs per VM | 0.1 |
| Total | 15 person-days | |
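The total can be checked by summing the duration column. The short sketch below copies the durations from the table (task labels are translated); the exact sum is 15.1 days, which the table rounds to 15.

```python
# Durations in days, copied from the migration table above.
durations = {
    "Infrastructure audit": 1.5,
    "Architecture diagram": 1.5,
    "Compliance checks": 1.5,
    "Cloud platform installation": 1.0,
    "Stability check": 0.5,
    "Automation study": 1.5,
    "Template development": 1.5,
    "Migration diagram": 1.0,
    "Writing the migration code": 1.5,
    "Stabilization": 1.5,
    "Cloud benchmark": 1.5,
    "Downtime tuning": 0.5,
    "VM loading": 0.1,
}

# Round to one decimal to hide floating-point noise in the sum.
total = round(sum(durations.values()), 1)
print(f"{len(durations)} tasks, {total} person-days")
```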

Stability checks (minimal HA)

| Action | Expected result |
|---|---|
| Shutting down one node | All services restart automatically on the other nodes |
| Simultaneous shutdown / restart of all nodes | Services come back up correctly after reboot |
