Main Page: Difference between revisions


(→NEWS)

(20 intermediate revisions by the same user not shown)

| Line 1: | Line 1: | ||

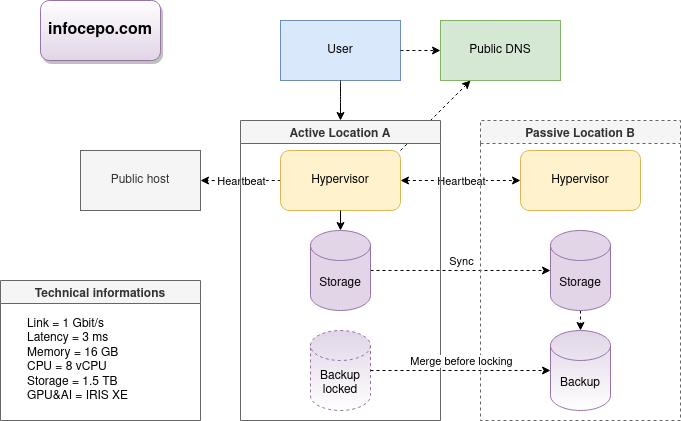

[[File:Infocepo-illustration.jpg|thumb|right]]

'''Discover cloud computing on infocepo.com''':

* Master cloud infrastructure

| Line 11: | Line 11: | ||

== AI Tools ==

*[https://chat.openai.com ChatGPT4] - public assistant with learning abilities.

*[https://github.com/open-webui/open-webui open-webui] + [https://www.scaleway.com/en/h100-pcie-try-it-now/ GPU H100] + [https://ollama.com Ollama] - private assistant and API.

*[https://github.com/ynotopec/summarize private summary]

=== DEV ===

(22/03/2024)

*[https://github.com/hiyouga/LLaMA-Factory LLM Fine Tuning]

*[https://huggingface.co/models Models Trending]

*[https://github.com/trending Project Trending]

| Line 27: | Line 28: | ||

*[https://github.com/chatchat-space/Langchain-Chatchat Chatchat] - private assistant with RAG capabilities, but Chinese-language.

*[https://www.nvidia.com/en-us/data-center/h200 NVIDIA H200] - KUBERNETES or HPC clusters for DATASCIENCE.

*[https://www.nvidia.com/fr-fr/geforce/graphics-cards/40-series/rtx-4080-family NVIDIA 4080] - GPU card for a private assistant.

==== INTERESTING LLMs ====

(22/03/2024)

* Vicuna-33B (private assistant)

* Qwen-14B (32k, RAG)

* Vicuna-7B (summary)

=== NEWS ===

(04/05/2024)

* [https://www.youtube.com/@lev-selector/videos Very good AI News]

* LLM [https://ollama.com/library/llama3-gradient llama3-gradient 1M] is available for long-context use.

* Real-time [https://betterprogramming.pub/color-your-captions-streamlining-live-transcriptions-with-diart-and-openais-whisper-6203350234ef '''transcription'''] with Diart can follow individual speakers.

* [https://github.com/openai-translator/openai-translator Translation] tools like Google Translate are becoming popular.

* [https://www.mouser.fr/ProductDetail/BittWare/RS-GQ-GC1-0109?qs=ST9lo4GX8V2eGrFMeVQmFw%3D%3D '''LLM 10x accelerator'''], and cheaper, with GROQ.

* [https://opensearch.org/docs/latest/search-plugins/conversational-search Opensearch with LLM]

* ACCEL: a very efficient and powerful AI vision chip.

* IBM NorthPole: a very efficient and powerful AI chip.

=== TRAINING ===

*[https://www.youtube.com/watch?v=4Bdc55j80l8 TRANSFORMERS ALGORITHM]

== CLOUD LAB ==

| Line 129: | Line 119: | ||

* Use open source STACKs where possible.

* Employ database caches such as MEMCACHED.

* Use queues for long batch jobs.

* Use buffers to stabilize real-time streams.

* More information at [https://wikitech.wikimedia.org/wiki/Wikimedia_infrastructure CLOUD WIKIPEDIA] and [https://github.com/systemdesign42/system-design GITHUB].

| Line 252: | Line 243: | ||

*Process (Process resource)

*Listener (Listener resource)

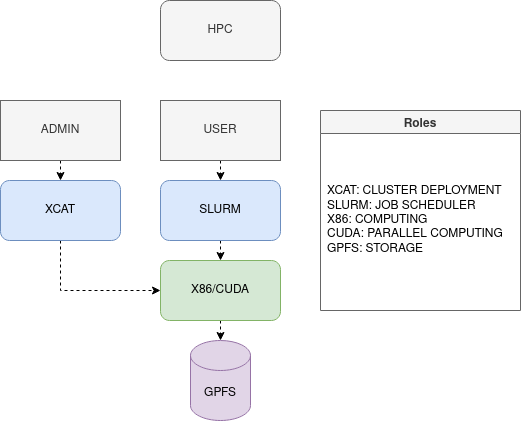

== HPC ==

[[File:HPC.drawio.png]]

== IT wage ==

*[http://jobsearchtech.about.com/od/educationfortechcareers/tp/HighestCerts.htm Best IT certifications]

*[https://www.silkhom.com/barometre-2021-des-tjm-dans-informatique-digital FREELANCE]

*[http://www.journaldunet.com/solutions/emploi-rh/salaire-dans-l-informatique-hays IT]

== SRE ==

Revision as of 02:55, 4 May 2024

Discover cloud computing on infocepo.com:

- Master cloud infrastructure

- Explore AI

- Compare Kubernetes and AWS

- Advance your IT skills with hands-on labs and open-source software.

Start your journey to expertise.

AI Tools

- ChatGPT4 - public assistant with learning abilities.

- open-webui + GPU H100 + Ollama - private assistant and API.

- private summary
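As an illustration of the "private assistant and API" setup, the sketch below builds a request for a locally running Ollama server. It assumes the default local endpoint `http://localhost:11434/api/generate` and a model named `llama3`; both are assumptions to adapt, and the helper names `build_request` and `ask` are hypothetical.

```python
import json
import urllib.request

# Assumed default local Ollama endpoint; adjust host and port for your setup.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3", url=OLLAMA_URL):
    """Build a non-streaming generate request (model name is an assumption)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3"):
    """Send the prompt and return the generated text (needs a running server)."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

With a server running and the model pulled, `ask("Explain RAG in one sentence.")` should return the generated text as a string.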

DEV

(22/03/2024)

- LLM Fine Tuning

- Models Trending

- Project Trending

- ChatBot Evaluate

- LLM Ranking

- Embeddings Ranking

- Image Evaluate

- Perplexity AI - R&D

- CogVLM - Private API for multimodal purposes. Usable with RAG.

- Vectors DB Ranking

- Chatchat - private assistant with RAG capabilities, but Chinese-language.

- NVIDIA H200 - KUBERNETES or HPC clusters for DATASCIENCE.

- NVIDIA 4080 - GPU card for a private assistant.

INTERESTING LLMs

(22/03/2024)

- Vicuna-33B (private assistant)

- Qwen-14B (32k, RAG)

- Vicuna-7B (summary)

NEWS

(04/05/2024)

- Very good AI News

- LLM llama3-gradient 1M is available for long-context use.

- Real-time transcription with Diart can follow individual speakers.

- Translation tools like Google Translate are becoming popular.

- LLM 10x accelerator, and cheaper, with GROQ.

- Opensearch with LLM

- ACCEL: a very efficient and powerful AI vision chip.

- IBM NorthPole: a very efficient and powerful AI chip.

TRAINING

CLOUD LAB

Presenting my LAB project.

CLOUD Audit

I created ServerDiff.sh for server audits. It enables configuration drift tracking and environment consistency checks.
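The idea of configuration drift tracking can be sketched as a comparison of two settings snapshots. This is not the ServerDiff.sh script itself, whose contents are not shown here; it is a minimal Python illustration that assumes each server exports its settings as `key=value` lines, and the function names are hypothetical.

```python
def parse_snapshot(text):
    """Parse 'key=value' lines into a dict, skipping blanks and comments."""
    settings = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        settings[key.strip()] = value.strip()
    return settings


def drift(reference, candidate):
    """Return keys missing, unexpected, or changed relative to the reference."""
    shared = reference.keys() & candidate.keys()
    return {
        "missing": sorted(reference.keys() - candidate.keys()),
        "extra": sorted(candidate.keys() - reference.keys()),
        "changed": sorted(k for k in shared if reference[k] != candidate[k]),
    }
```

Running `drift` between a reference server and each other server in an environment gives a simple consistency report: three empty lists mean the two snapshots agree.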

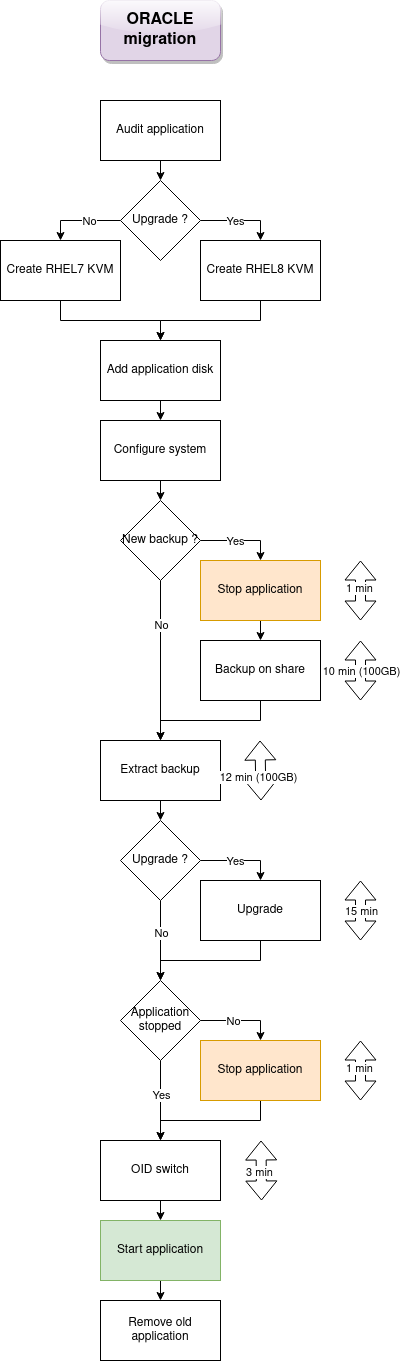

CLOUD Migration Example

- 1.5d: Infrastructure audit of 82 services (ServerDiff.sh)

- 1.5d: Create cloud architecture diagram

- 1.5d: Compliance check of 2 clouds (6 hypervisors, 6TB memory)

- 1d: Cloud installations

- 0.5d: Stability check

| ACTION | RESULT | OK/KO |
|---|---|---|
| Put n/2-1 nodes into maintenance (1 node if the cluster has 2 nodes). | All resources are started. | |
| Take all nodes out of maintenance. Power off n/2-1 nodes (1 node if the cluster has 2 nodes), different from those in the previous test. | All resources are started. | |
| Power off all nodes simultaneously, then power on all nodes simultaneously. | All resources are started. | |

- 1.5d: Cloud automation study

- 1.5d: Develop 6 templates (2 clouds, 2 OS, 8 environments, 2 versions)

- 1d: Create migration diagram

- 1.5d: Write 138 lines of migration code (MigrationApp.sh)

- 1.5d: Process stabilization

- 1.5d: Cloud vs old infrastructure benchmark

- 0.5d: Unavailability time calibration per migration unit

- 5min: Load 82 VMs (env, os, application_code, 2 IP)

Total = 15 man-days
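The total can be checked by summing the day estimates from the list. The short labels below are paraphrased for illustration, and the 5-minute VM load is left out of the sum as negligible.

```python
# Day estimates copied from the migration list; labels are shortened.
TASKS = {
    "Infrastructure audit": 1.5,
    "Cloud architecture diagram": 1.5,
    "Compliance check": 1.5,
    "Cloud installations": 1.0,
    "Stability check": 0.5,
    "Cloud automation study": 1.5,
    "Template development": 1.5,
    "Migration diagram": 1.0,
    "Migration code": 1.5,
    "Process stabilization": 1.5,
    "Cloud vs old infrastructure benchmark": 1.5,
    "Unavailability time calibration": 0.5,
}


def total_days(tasks):
    """Sum the per-task estimates, in man-days."""
    return sum(tasks.values())


print(f"Total = {total_days(TASKS):g} man-days")
```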

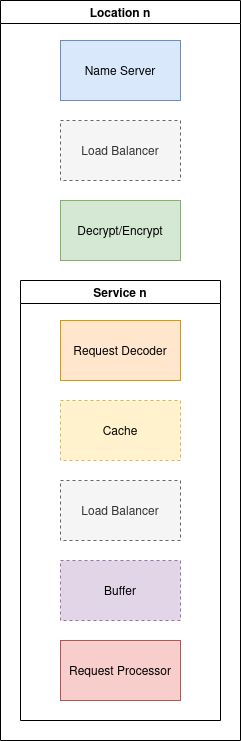

WEB Enhancement

- Formalize infrastructure for flexibility and reduced complexity.

- Use a name server that tracks customer location, such as GDNS.

- Use minimal instances with a network load balancer such as LVS.

- Compare prices of dynamic computing services, and beware of technology lock-in.

- Use an efficient frontend TLS terminator such as HAPROXY.

- Opt for a fast HTTP cache such as VARNISH, and Apache Traffic Server for large files.

- Use a PROXY with TLS termination, such as ENVOY, for service compatibility.

- Consider serverless services for standard runtimes, keeping potential incompatibilities in mind.

- Employ load balancing or native services for dynamic computing power.

- Use open source STACKs where possible.

- Employ database caches such as MEMCACHED.

- Use queues for long batch jobs.

- Use buffers to stabilize real-time streams.

- More information at CLOUD WIKIPEDIA and GITHUB.
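The database cache recommendation is commonly applied as a cache-aside pattern: read from the cache first and query the database only on a miss. The sketch below uses an in-memory dictionary with a time-to-live as a stand-in for MEMCACHED, so it runs without a server; `TTLCache`, `cached_lookup` and `load_from_db` are hypothetical names.

```python
import time


class TTLCache:
    """In-memory stand-in for a cache server, with per-entry expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._store[key]  # Expired: drop it and report a miss.
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self._clock() + self._ttl)


def cached_lookup(cache, key, load_from_db):
    """Cache-aside read: try the cache, fall back to the database on a miss."""
    value = cache.get(key)
    if value is None:  # Note: a stored None is treated as a miss.
        value = load_from_db(key)
        cache.set(key, value)
    return value
```

The time-to-live bounds how stale a cached row can get, which is the usual trade-off against database load.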

CLOUD WIKIPEDIA

CLOUD vs HW

| Function | Kubernetes | OpenStack | AWS | Bare-metal | HPC | CRM | oVirt |
|---|---|---|---|---|---|---|---|
| Deployment Tools (Tools used for deployment) | Helm, YAML, Operator, Ansible, Juju, ArgoCD | Ansible, Packer, Terraform, Juju | Ansible, Terraform, CloudFormation, Juju | Ansible, Shell Scripts | xCAT, Clush | Ansible, Shell Scripts | Ansible, Python, Shell Scripts |
| Bootstrap Method (Initial configuration and setup) | API | API, PXE | API | PXE, IPMI | PXE, IPMI | PXE, IPMI | PXE, API |
| Router Control (Routing services) | API (Kube-router) | API (Router/Subnet) | API (Route Table/Subnet) | Linux, OVS, External Hardware | xCAT, External Hardware | Linux, External Hardware | API |
| Firewall Control (Firewall rules and policies) | Ingress, Egress, Istio, NetworkPolicy | API (Security Groups) | API (Security Group) | Linux Firewall | Linux Firewall | Linux Firewall | API |
| Network Virtualization (VLAN/VxLAN technologies) | Multiple Options | VPC | VPC | OVS, Linux, External Hardware | xCAT, External Hardware | Linux, External Hardware | API |
| Name Server Control (DNS services) | CoreDNS | DNS-Nameserver | Amazon Route 53 | GDNS | xCAT | Linux, External Hardware | API, External Hardware |
| Load Balancer (Load balancing options) | Kube-proxy, LVS (IPVS) | LVS | Network Load Balancer | LVS | SLURM | Ldirectord | N/A |
| Storage Options (Available storage technologies) | Multiple Options | Swift, Cinder, Nova | S3, EFS, FSx, EBS | Swift, XFS, EXT4, RAID10 | GPFS | SAN | NFS, SAN |

CLOUD providers

CLOUD INTERNET NETWORK

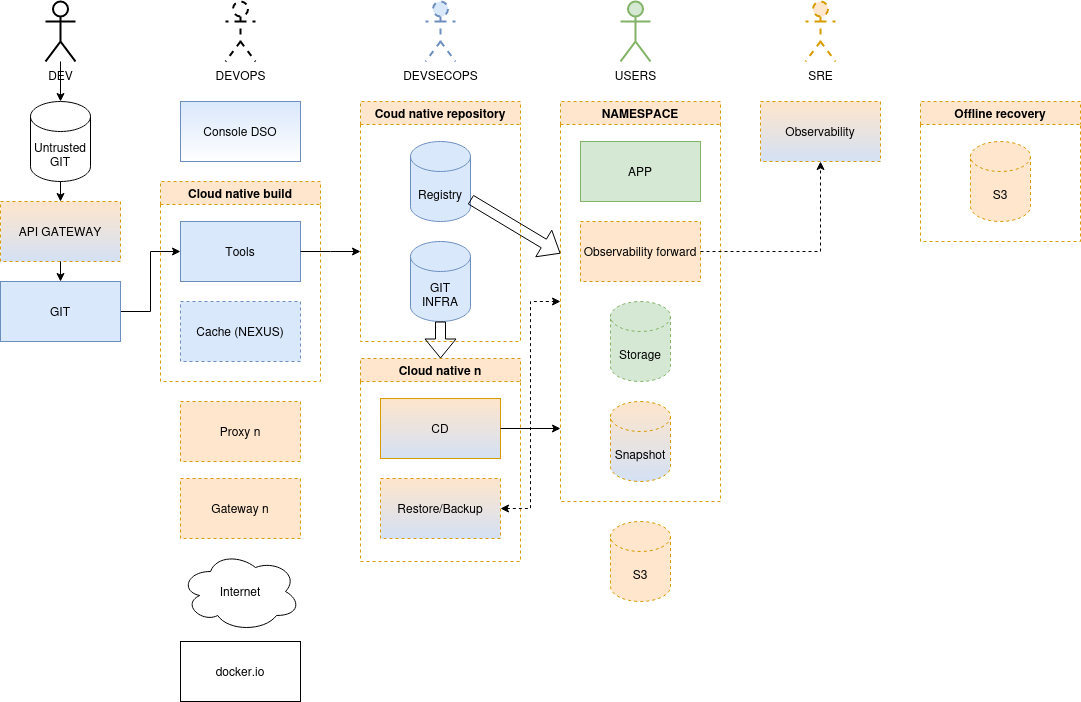

CLOUD NATIVE

- OFFICIAL STACKS

- DevSecOps:

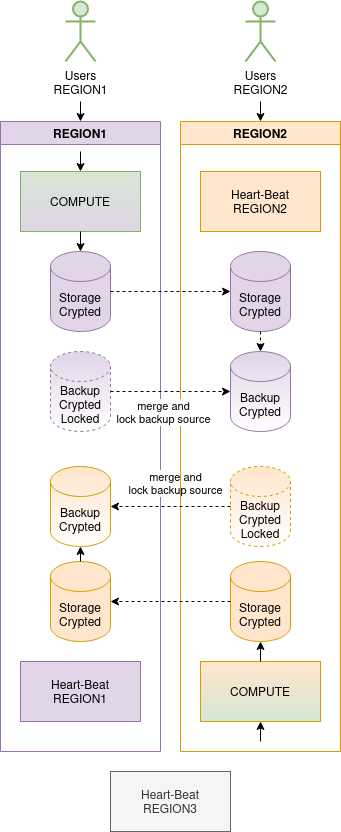

High Availability (HA) with Corosync+Pacemaker

Typical Architecture

- Dual-room.

- IPMI LAN (fencing).

- NTP, DNS+DHCP+PXE+TFTP+HTTP (auto-provisioning), PROXY (updates or internal REPOSITORY).

- Choose 2+ node clusters.

- For 2 nodes, use the COROSYNC 2-node configuration and stagger shutdowns by 10 seconds for stability. For better stability, choose an architecture with 3 or more nodes.

- Allocate 4GB per database for DB resources. CPU requirements are generally low.
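The preference for 3 or more nodes follows from majority quorum arithmetic. The sketch below assumes one vote per node and models the 2-node special case as a quorum of a single vote, which is only safe with working fencing; the function names are hypothetical.

```python
def quorum_votes(nodes, two_node=False):
    """Votes needed for quorum, assuming one vote per node."""
    if nodes < 1:
        raise ValueError("a cluster needs at least one node")
    if two_node:
        if nodes != 2:
            raise ValueError("two-node mode only applies to 2-node clusters")
        return 1  # Special case: a single surviving node keeps quorum.
    return nodes // 2 + 1  # Strict majority.


def tolerated_failures(nodes, two_node=False):
    """How many nodes can fail while the remainder still holds quorum."""
    return nodes - quorum_votes(nodes, two_node)


for n in (2, 3, 4, 5):
    print(n, "nodes:", tolerated_failures(n), "failure(s) tolerated")
```

Without the special case, a 2-node cluster tolerates no failure at all, while 3 nodes tolerate one through a plain majority, which is why the larger layout is the more stable choice.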

Typical Service Pattern

- Multipath

- LUN

- LVM (LVM resource)

- FS (FS resource)

- NFS (FS resource)

- User

- IP (IP resource)

- DNS name

- Process (Process resource)

- Listener (Listener resource)
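Assuming the list above is read as a start order, a failover can be sketched as stopping the resources in reverse on the failing node and then starting them in order on the standby node, which is how an ordered resource group behaves. The names below are hypothetical and only model the ordering, not real cluster commands.

```python
# Resource names copied from the service pattern, in assumed start order.
SERVICE_STACK = [
    "Multipath", "LUN", "LVM", "FS", "NFS",
    "User", "IP", "DNS name", "Process", "Listener",
]


def start_order(stack):
    """Lower layers first: storage, then network identity, then the service."""
    return list(stack)


def stop_order(stack):
    """Reverse of the start order, so dependents stop before their dependencies."""
    return list(reversed(stack))


def failover_plan(stack):
    """Stop everything on the failing node, then start it on the standby."""
    stops = [("stop", resource) for resource in stop_order(stack)]
    starts = [("start", resource) for resource in start_order(stack)]
    return stops + starts
```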